Researchers from MIT, Google, and elsewhere have designed a system that can verify when quantum chips have accurately performed complex computations that classical computers can't. The method traces each computing path to check for correct behavior. Because the verification is modular, it reduces the complexity of validation and makes it faster. That should speed up both the design of validated quantum chips and the checking of experiments.

Full-scale error-corrected quantum computers will require millions of qubits. In the past few years, researchers have started developing Noisy Intermediate-Scale Quantum (NISQ) chips, which contain around 50 to 100 qubits. This is enough to demonstrate quantum advantage, meaning the NISQ chip can solve certain problems that are beyond classical computers. Verifying that the chips performed operations as expected, however, can be very inefficient. The chips' outputs can look entirely random, so it takes a long time to simulate the steps and determine whether everything went according to plan.

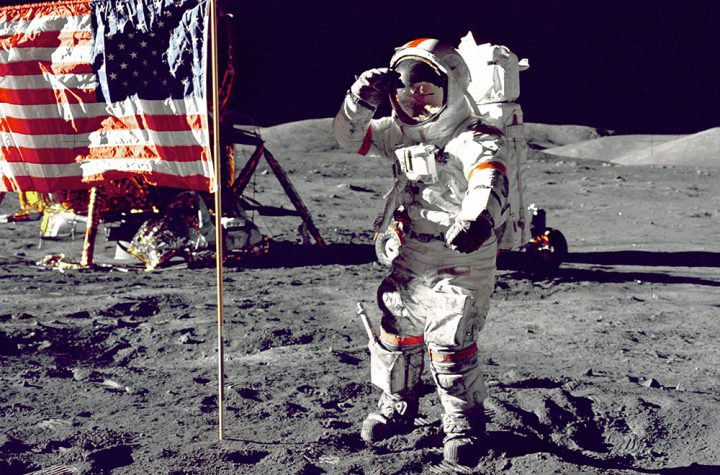

Above: Researchers from MIT, Google, and elsewhere have designed a novel method for verifying when quantum processors have accurately performed complex computations that classical computers can't. They validate their method on a custom system (pictured) that's able to capture how accurately a photonic chip (PNP) computed a notoriously difficult quantum problem. Image: Mihika Prabhu

The researchers' method traces an output quantum state generated by the quantum circuit back to a known input state. Doing so reveals which circuit operations were performed on the input to produce the output. Those operations should always match what the researchers programmed. If they do not, the researchers can use the information to pinpoint where things went wrong on the chip.
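As a rough classical illustration of the "trace the output back to the input" idea, the sketch below undoes a programmed sequence of operations and checks how closely the result matches the known input. It is a toy model under stated assumptions, not the researchers' actual protocol: the two-level state, the `rotation` layers, and the helper names (`run`, `trace_back`, `fidelity`) are all hypothetical and chosen only for illustration.

```python
from math import cos, sin

def rotation(theta):
    """A 2x2 rotation, standing in for one programmed circuit layer (assumption)."""
    return [[complex(cos(theta)), complex(-sin(theta))],
            [complex(sin(theta)), complex(cos(theta))]]

def matvec(matrix, vector):
    """Multiply a matrix by a state vector."""
    return [sum(row[j] * vector[j] for j in range(len(vector))) for row in matrix]

def dagger(matrix):
    """Conjugate transpose, which is the inverse of a unitary layer."""
    return [[matrix[j][i].conjugate() for j in range(len(matrix))]
            for i in range(len(matrix[0]))]

def run(layers, state):
    """Apply the layers in order to the input state."""
    for layer in layers:
        state = matvec(layer, state)
    return state

def trace_back(layers, output):
    """Undo the programmed layers in reverse order."""
    for layer in reversed(layers):
        output = matvec(dagger(layer), output)
    return output

def fidelity(a, b):
    """Squared overlap between two normalized states (1.0 means identical)."""
    return abs(sum(x.conjugate() * y for x, y in zip(a, b))) ** 2

known_input = [1 + 0j, 0j]
programmed = [rotation(0.3), rotation(1.1)]   # what was programmed
faulty = [rotation(0.3), rotation(1.3)]       # what a miscalibrated chip might do

good = fidelity(trace_back(programmed, run(programmed, known_input)), known_input)
bad = fidelity(trace_back(programmed, run(faulty, known_input)), known_input)
print(good, bad)
```

When the chip does what was programmed, tracing back recovers the input and the fidelity is essentially 1. When one layer is off, the recovered state no longer matches the input, which signals that the performed operations differ from the programmed ones.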

In experiments, the researchers successfully ran a popular computational task used to demonstrate quantum advantage, called boson sampling, which is usually performed on photonic chips. In this exercise, phase shifters and other optical components manipulate a set of input photons and convert them into a different quantum superposition of output photons. Ultimately, the task is to calculate the probability that a certain input state will match a certain output state. That is essentially a sample from some probability distribution.
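To make the probability calculation concrete, here is a minimal sketch that uses the standard boson sampling result: the probability of a given output photon pattern is the squared magnitude of the permanent of a submatrix of the circuit's unitary, divided by factorials of the photon counts. The function names (`permanent`, `output_probability`) and the two-mode beam splitter example are assumptions for illustration and do not come from the article. The brute-force permanent shown here scales factorially, which hints at why classical verification becomes impractical as photon numbers grow.

```python
from itertools import permutations
from math import factorial, prod, sqrt

def permanent(matrix):
    """Brute-force permanent: like a determinant, but with no sign changes."""
    n = len(matrix)
    total = 0
    for perm in permutations(range(n)):
        term = 1
        for row, col in enumerate(perm):
            term *= matrix[row][col]
        total += term
    return total

def output_probability(unitary, input_occ, output_occ):
    """Probability of seeing output_occ photons per mode given input_occ."""
    if sum(input_occ) != sum(output_occ):
        return 0.0  # a lossless circuit conserves photon number
    # Repeat each column (input mode) and row (output mode) once per photon.
    cols = [m for m, count in enumerate(input_occ) for _ in range(count)]
    rows = [m for m, count in enumerate(output_occ) for _ in range(count)]
    sub = [[unitary[r][c] for c in cols] for r in rows]
    norm = (prod(factorial(k) for k in input_occ)
            * prod(factorial(k) for k in output_occ))
    return abs(permanent(sub)) ** 2 / norm

# A 50:50 beam splitter acting on two optical modes.
s = 1 / sqrt(2)
beam_splitter = [[s, s], [s, -s]]

# One photon enters each input mode.
p_split = output_probability(beam_splitter, (1, 1), (1, 1))
p_both_top = output_probability(beam_splitter, (1, 1), (2, 0))
p_both_bottom = output_probability(beam_splitter, (1, 1), (0, 2))
print(p_split, p_both_top, p_both_bottom)
```

In this two-photon example the photons never leave in separate modes; they always exit together, half of the time in each mode. This is the well-known Hong-Ou-Mandel effect, and it is a small instance of the interference that makes larger boson sampling outputs hard to predict classically.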

Brian Wang is a prolific business-oriented writer on emerging and disruptive technologies. He is known for insightful articles that combine business and technical analysis, catch the attention of the general public, and are also useful for those in the industries. He is the sole author and writer of nextbigfuture.com, the top online science blog. He is also involved in angel investing and raising funds for breakthrough technology startup companies.
